New global research from TrendLife reveals a wide gap between AI adoption and awareness of AI-powered threats. Across 10,350 respondents in 9 countries, the data tells one consistent story: people are using AI more than ever but feel increasingly underprepared for what that means for their safety and privacy.

Worry about AI outpaces excitement

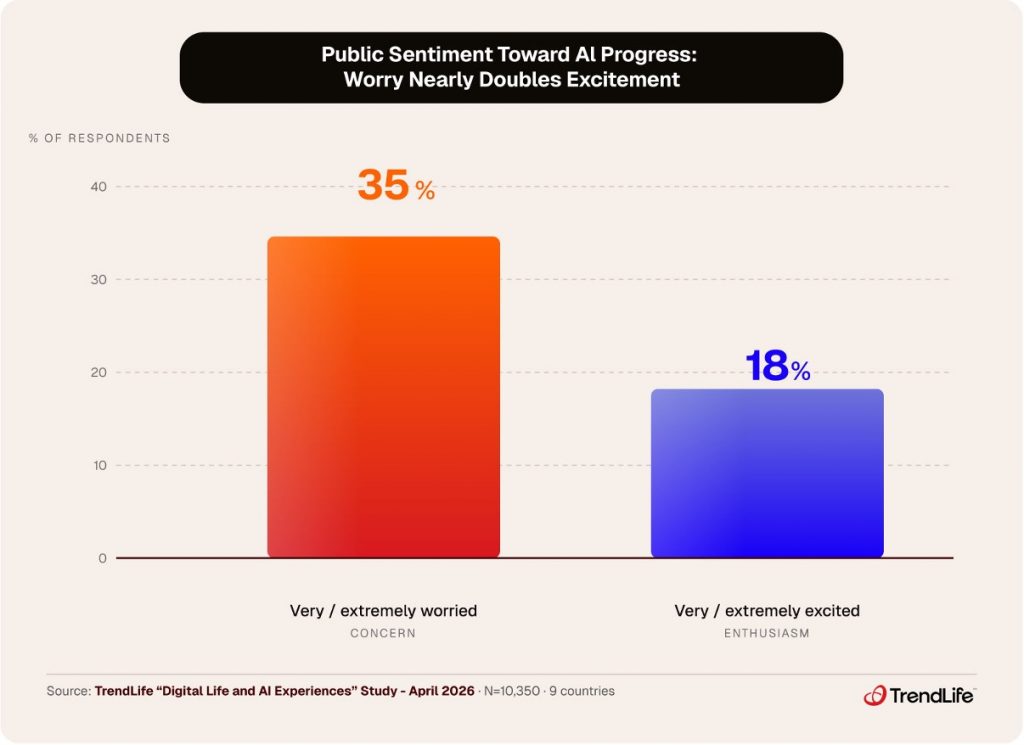

Public sentiment toward AI leans more toward caution than enthusiasm. Our research found that 35% of global respondents describe themselves as “very” or “extremely” worried about the progress of generative AI. By contrast, only 18% say they feel equivalently excited. Worry outweighs excitement by nearly two to one.

This is the dominant frame through which most people experience AI right now, and for consumer-facing AI products it matters enormously. Buzzword-filled claims about “robust secure environments” or “next-gen capabilities” that fail to acknowledge people’s legitimate fears will feel tone-deaf. The public is ready to be guided and reassured.

Key takeaway: More than 1 in 3 people globally are “very” or “extremely” worried about AI progress. Consumer trust must be earned, not assumed.

Life events are a hidden vulnerability

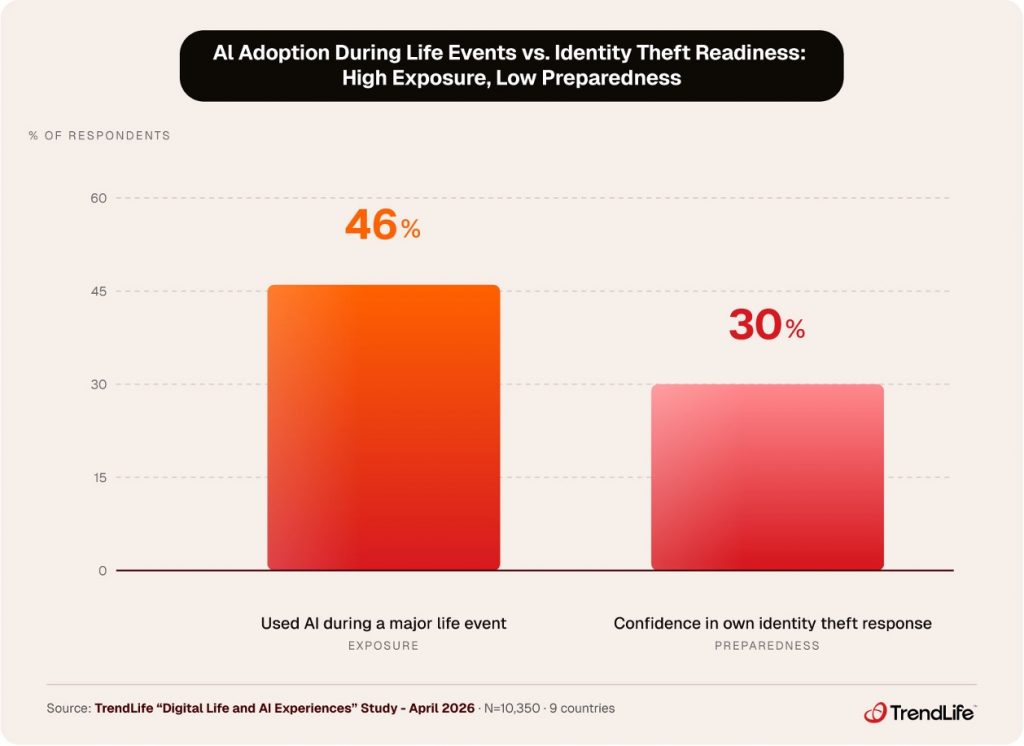

The finding that perhaps most deserves attention is this: 46% of respondents say they have used an AI tool to help navigate a major life event, whether a job search, a home purchase, a retirement transition, or dealing with a loved one’s estate or will.

These are high-stakes moments. People are sharing more sensitive and specific personal information than usual, moving quickly through unfamiliar processes, and often dealing with organizations or platforms they’ve never used before. More than half of respondents (51%) said they needed to share personal data over the internet as part of a recent life event: identification numbers, financial details, dates of birth, contact information, and more.

When respondents were asked which life events they consider most likely to make them a target of cybercrime, they ranked making a large purchase or investment first, followed by applying for government benefits and buying or selling a home. The victimization data tracks with these instincts. Among people who had recently gone through a job search, more than 1 in 5 said they or someone close to them had been the victim of a scam or fraud during that process. For those navigating a large purchase or investment, that figure climbed to nearly 1 in 4.

Yet proactive protective behavior in these moments is notably weak. Among those who shared personal data as part of a life event, the most common actions taken were enabling two-factor authentication (49%), monitoring bank accounts for suspicious activity (44%), and avoiding public Wi-Fi (44%). These are sensible measures, but they are largely routine rather than event-specific. Only 11% sought any form of identity protection service in response to the situation, and nearly 1 in 10 took no protective action at all. For a set of experiences that respondents themselves rank among their highest-risk moments for fraud and identity theft, the gap between perceived risk and deliberate precaution is striking.

Key takeaway: 46% use AI during major life events. Only 30% are confident about identity theft response. Exposure peaks when readiness is lowest.

People are not equipped to detect AI-generated scams

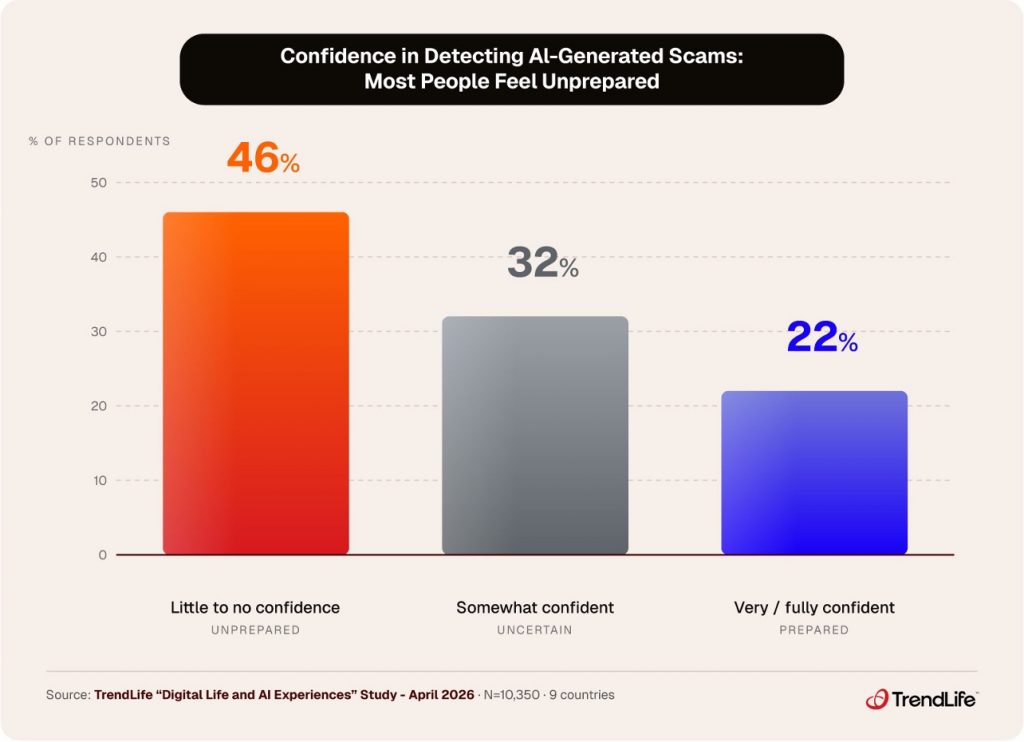

As AI-powered fraud scales up, the gap in public preparedness is significant. Only 22% of respondents say they feel confident they can spot an AI-generated scam or deepfake. Meanwhile, almost half report little or no confidence in their ability to do so. Confidence also falls with age: nearly 4 in 10 respondents aged 18–24 (39%) feel highly confident, compared with fewer than 1 in 10 (9.2%) of those aged 65+.

It is worth noting that the 22% who do feel confident are self-reporting. Whether that confidence would hold in practice is a different question entirely. Today’s AI-generated scams can clone a voice from only a few seconds of audio, produce deepfake video with lifelike facial movement and natural speech, and generate phishing messages free of the grammatical errors and awkward phrasing that people have historically relied on as warning signs. Feeling prepared and being prepared, in this environment, are not the same thing.

The lack of protective behavior makes this worse. People broadly understand that risks exist but lack the tools and the confidence to act on that understanding.

This is not a niche concern. AI-generated phishing, voice cloning, and deepfake impersonation are operational and scaling. The fraud landscape has changed substantially, but most consumers have not changed with it, and the data suggests they are not sure how to start.

Key takeaway: 46% have little or no confidence spotting AI scams. Only 1 in 5 have ever tried using AI for protection, and fewer still trusted the result.

Nearly 70% of parents want AI safety tools for their kids

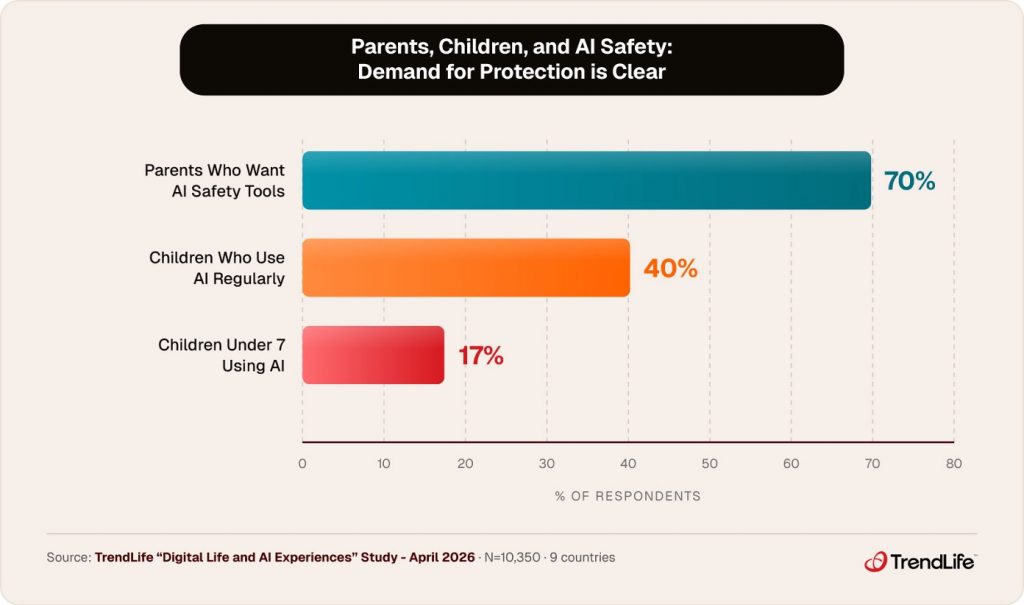

AI concerns abound across age groups, but especially among parents. When asked whether they would use a tool to ensure their children are using AI in a safe and productive manner, 7 in 10 parents said yes. The need is not theoretical; it is explicit.

The adoption data makes this more urgent: 40% of parents say their child already uses AI regularly and, more strikingly, 17% report AI use by children under 7. Parents are deeply aware of the risks and clearly want a way to protect their children.

A solution that delivers AI safety for families at scale does not yet exist in a mainstream, trusted product. High adoption, high concern, and explicit demand all point in the same direction.

Key takeaway: 70% of parents want AI safety tools for their kids. 40% of parents say their child is already a regular AI user, and 17% report AI use by children under age 7.

What this means

The data from our research does not describe a population that is naive about AI. It describes a population that is aware, worried, and largely without the tools to act on that awareness. Worry is the dominant emotion, confidence is low, and demand for protection is high and rising, particularly as the world enters a phase where AI is infiltrating almost every facet of life.

AI scams are not going away, children are already AI users, and life events create compounding vulnerabilities. The question for any consumer-facing AI brand is straightforward: are you part of the problem, or are you part of the solution people are asking for?

At TrendLife, we are building toward that second option. More to come.

About the Research

TrendLife Global Study: Digital Life and AI Experiences. Online survey conducted March 25–April 3, 2026, among consumers 18+ in Australia, Canada, Ireland, Japan, New Zealand, Singapore, Taiwan, the United Kingdom, and the United States. N=10,350. Data are weighted to be nationally representative of each country by age, gender, and household income.
